AI that actually saves your team hours.

We treat AI like a financial system: we measure whether it answers well, watch what it costs, and make it more accurate every day.

Demo output: Positive tone · 87% confidence · 3 signals

The demo is lexicon-based. In production, the same UI runs on top of Llama, Claude, or a custom fine-tune.

What we solve

- The AI makes things up, and you can't tell how often

- It costs a lot because nothing is optimised

- Nobody dares ship it · there's no way to measure quality

- Your support team is drowning in tickets

What we ship

- AI that answers from your own data · with sources

- Cheaper to run, picks the best model automatically

- Quality checks run automatically on every change

- Dashboard: what gets asked, what it costs, how good it is
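"Picks the best model automatically" can be implemented in many ways. As a minimal sketch, assuming a hypothetical catalogue of models with made-up prices and quality scores (none of these names or numbers come from a real deployment), a router can pick the cheapest model that still clears a quality bar:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical label, not a real product tier
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    quality: float             # eval score in [0, 1], e.g. from a golden set


# Illustrative catalogue; real numbers would come from your own evals.
CATALOGUE = [
    ModelOption("small-local", 0.0002, 0.78),
    ModelOption("mid-hosted", 0.0020, 0.88),
    ModelOption("large-hosted", 0.0150, 0.95),
]


def pick_model(min_quality: float, catalogue=CATALOGUE) -> ModelOption:
    """Return the cheapest model whose measured quality meets the bar."""
    eligible = [m for m in catalogue if m.quality >= min_quality]
    if not eligible:
        # Nothing clears the bar: fall back to the highest-quality model.
        return max(catalogue, key=lambda m: m.quality)
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)
```

Calling `pick_model(0.8)` on this catalogue returns the mid-tier option, because the small model falls below the bar and the large one costs more.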

What you get

- Chatbot on your website that knows your company

- Emails and proposals written automatically

- All your documents searchable from one place

- Runs on your own server · nothing leaks out

How we work on this

The same risk-reducing rhythm on every project · each step has a measurable deliverable.

- 01 / 04

Data + workflow audit

We go through your data and the support / sales / ops workflows, and pinpoint where AI can actually save time.

- 02 / 04

Retrieval MVP

End of week 1: a RAG pipeline prototype against your data, with source citations. We evaluate, not just demo.
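To make "retrieval with source citations" concrete, here is a deliberately small sketch. It assumes a bag-of-words cosine similarity in place of a real embedding model and vector store, and the document names and texts are invented for illustration:

```python
import math
import re
from collections import Counter

# Invented sample documents; in practice these would be chunks of your data.
DOCS = {
    "refunds.md": "Refunds are issued within 14 days of a return request.",
    "shipping.md": "Orders ship within 2 business days.",
    "hours.md": "Support is available on weekdays from 9 to 17.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, float]]:
    """Return up to k (source, score) pairs with a non-zero score, best first."""
    q = Counter(tokenize(question))
    scored = [(src, cosine(q, Counter(tokenize(text)))) for src, text in docs.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [(src, score) for src, score in scored[:k] if score > 0]


def build_prompt(question: str, docs: dict[str, str], k: int = 2) -> str:
    """Number the retrieved passages so the model can cite them as [1], [2]."""
    hits = retrieve(question, docs, k)
    context = "\n".join(
        f"[{i}] ({src}) {docs[src]}" for i, (src, _) in enumerate(hits, 1)
    )
    return (
        "Answer using only the numbered sources and cite them like [1].\n\n"
        f"{context}\n\nQuestion: {question}"
    )
```

The numbered context is what makes citations checkable: each `[n]` in the model's answer maps back to a named source.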

- 03 / 04

Agent + guardrails

Tool use, routing, rate limits, PII scrubber. Production evals in CI before every release.
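As an illustration of the PII scrubber step, the sketch below masks email addresses and phone-like numbers before text reaches a model. The two regular expressions are simplifying assumptions and will miss many real-world formats; a production scrubber would need broader coverage (names, addresses, IDs).

```python
import re

# Deliberately simple patterns: a sketch, not a complete PII detector.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s()-]{7,}\d"),
}


def scrub_pii(text: str) -> str:
    """Replace each match with a bracketed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(scrub_pii("Mail anna@example.com or call +36 30 123 4567 today"))
# prints: Mail [EMAIL] or call [PHONE] today
```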

- 04 / 04

Live + tuning

Deploy, observability (LLM cost, latency, quality), weekly iteration driven by the dashboard.
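The three observability signals named here (cost, latency, quality) can be tracked per call and rolled up for a dashboard. The sketch below assumes a single flat, hypothetical price per 1,000 tokens and a boolean quality check per call; real pricing and scoring are usually more granular.

```python
from dataclasses import dataclass, field


@dataclass
class CallLog:
    price_per_1k_tokens: float  # hypothetical flat price
    records: list = field(default_factory=list)

    def record(self, tokens: int, latency_s: float, passed_eval: bool) -> None:
        """Store cost, latency and quality outcome for one LLM call."""
        cost = tokens / 1000 * self.price_per_1k_tokens
        self.records.append(
            {"cost": cost, "latency_s": latency_s, "passed": passed_eval}
        )

    def summary(self) -> dict:
        """Aggregate the figures a weekly review would look at."""
        n = len(self.records)
        if n == 0:
            return {"calls": 0, "total_cost": 0.0, "avg_latency_s": 0.0, "pass_rate": 0.0}
        return {
            "calls": n,
            "total_cost": round(sum(r["cost"] for r in self.records), 6),
            "avg_latency_s": sum(r["latency_s"] for r in self.records) / n,
            "pass_rate": sum(r["passed"] for r in self.records) / n,
        }
```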

Common questions

What most people ask · answered before you have to.

Yes, it can run on your own infrastructure. We deploy Llama, Mistral or Qwen on your GPU or in your VPC. The setup is SOC 2-friendly, and your data never leaves the environment.

We build an eval set from your data. Golden-set + LLM-as-judge + factual regression tests run before every deploy. You get a trend dashboard.
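A golden-set check that blocks a deploy can be very small. The sketch below covers only the golden-set part (no LLM-as-judge) and assumes a hypothetical `answer_fn` that wraps the system under test, invented test cases, and a simple "answer must contain this phrase" criterion:

```python
from typing import Callable

# Invented cases; a real golden set is built from your own data.
GOLDEN_SET = [
    {"question": "What is the refund window?", "must_contain": "14 days"},
    {"question": "When is support available?", "must_contain": "weekdays"},
]


def pass_rate(answer_fn: Callable[[str], str], golden_set=GOLDEN_SET) -> float:
    """Share of golden cases whose answer contains the required phrase."""
    passed = sum(
        case["must_contain"].lower() in answer_fn(case["question"]).lower()
        for case in golden_set
    )
    return passed / len(golden_set)


def release_gate(answer_fn: Callable[[str], str], threshold: float = 0.9) -> bool:
    """Return True only if the pass rate meets the (assumed) threshold."""
    return pass_rate(answer_fn) >= threshold
```

In CI, a failing gate would typically exit non-zero so the release stops, and the pass rate per run is the number a trend dashboard plots.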

Pilot in 2-4 weeks. Production in 4-12 weeks, depending on complexity. If you want a measurable KPI fast, 6 weeks is realistic.

We reduce made-up answers with retrieval-grounded responses, citations, guardrails, and refusal to answer when the sources don't cover the question. You won't get to zero, but 95%+ of hallucinations are avoidable and the rest is monitored.
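Refuse-to-answer is often just a threshold on retrieval confidence. In this sketch the score threshold, the refusal message, and the `generate` callback (standing in for the model call) are all assumptions for illustration:

```python
from typing import Callable

REFUSAL = "I can't answer that from the available sources."


def grounded_sources(hits: list[tuple[str, float]], min_score: float = 0.3) -> list[str]:
    """Keep only sources whose retrieval score clears the threshold."""
    return [source for source, score in hits if score >= min_score]


def answer_or_refuse(
    hits: list[tuple[str, float]],
    generate: Callable[[list[str]], str],
    min_score: float = 0.3,
) -> str:
    """Answer with citations if something relevant was retrieved, else refuse."""
    sources = grounded_sources(hits, min_score)
    if not sources:
        return REFUSAL
    return f"{generate(sources)} (sources: {', '.join(sources)})"
```

The design choice is that the model is never asked to answer when nothing relevant was retrieved, which removes one common source of made-up answers.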

Pilot from €4,000, production builds €15,000-€60,000 depending on scope, embedded retainer from €9,000/month. We scope a fixed price after a free 30-minute call · no open-ended hourly meter.

Yes, the AI can answer from your own documents · that's exactly what RAG (retrieval-augmented generation) is for. We index your PDFs, wikis, ticket history and contracts, in Hungarian or English, into a vector store the model retrieves from. Your data is never used to train a public model.

You own all of it · code, prompts, eval sets, infrastructure. We hand over a runbook and can stay on a retainer for tuning, or step away cleanly. No licence fee, no lock-in.

Shipped work

2026 · 01 / 04 · Websites, web apps & online shops · Custom software · AI solutions

Vilya Protection is a SaaS platform built to protect public figures and large gatherings. Real-time threat assessment, tactical dashboards, 3D environmental visualisation, evacuation routing. The team reached out on LinkedIn. One week from brief, two tiny tweaks, then the public site and the demo dashboard went live.

What we shipped

- Real-time threat assessment with severity classification

- Tactical dashboard for protection details

- 3D venue visualisation and evacuation route planning

What we used: Next.js · TypeScript · Three.js · WebGL

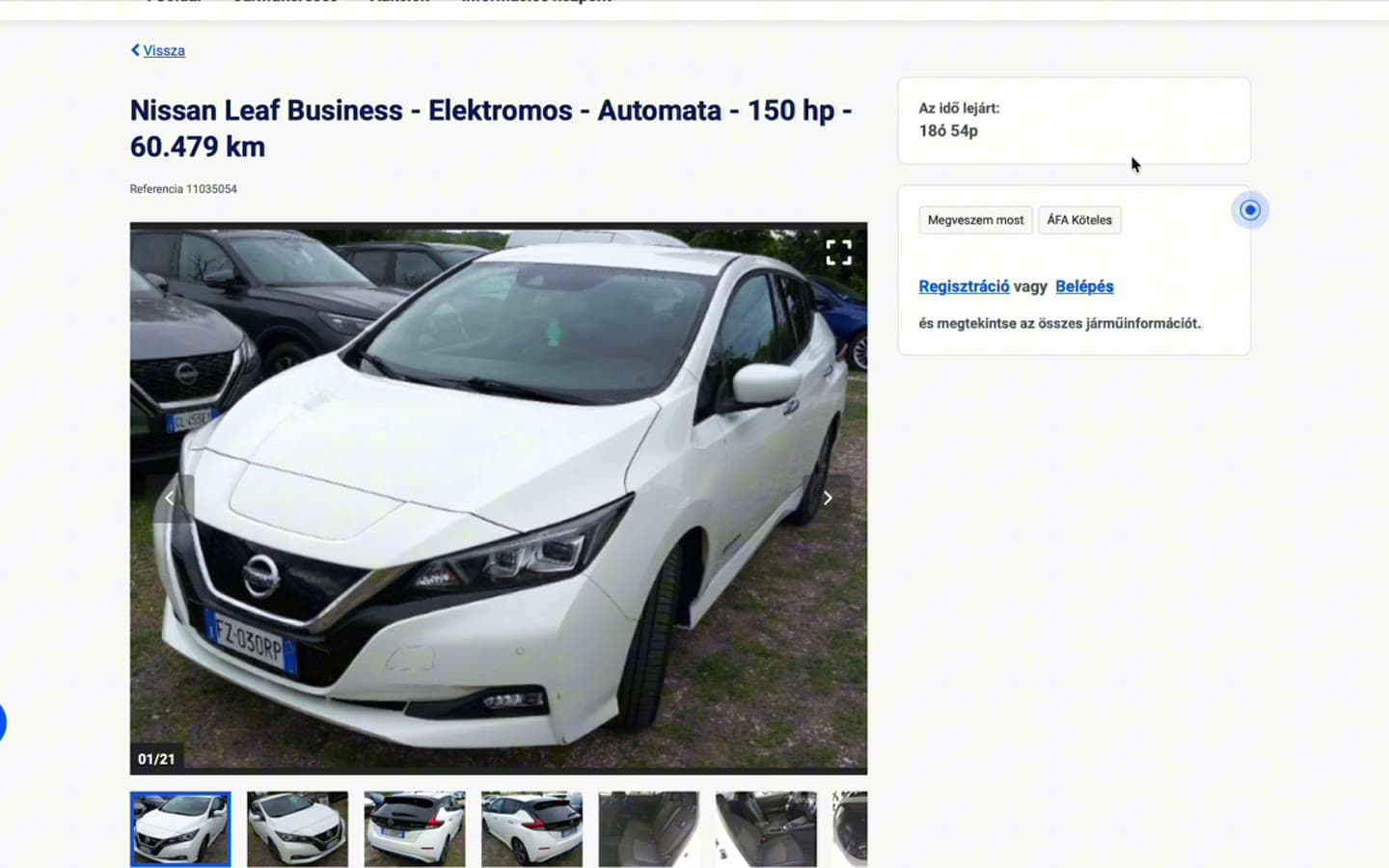

2026 · 02 / 04 · Custom software · Websites, web apps & online shops · AI solutions

AutoImport scrapes EU B2B car-auction Buy-Now lots, matches each lot against real Hungarian used-car listings, and surfaces only vehicles where the verified resale margin clears every cost: transport, buyer fees, Hungarian registration tax. New deals push to a private Telegram channel as a swipeable photo carousel.

What we shipped

- Live EU auction scraper (Openlane Buy-Now)

- Comp-matched against Hungarian retail listings

- Landed-cost margin · transport + fees + reg tax

What we used: Next.js · TypeScript · Postgres · Playwright · Telegram
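The landed-cost margin described for AutoImport is simple arithmetic: expected resale price minus everything it costs to get the car on the road. The sketch below is an illustration of that arithmetic only, not AutoImport's code; the field names, the sample figures, and the `min_margin` parameter are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lot:
    buy_now_price: float
    transport: float
    buyer_fees: float
    registration_tax: float
    expected_resale: float  # from matched retail listings

    @property
    def landed_cost(self) -> float:
        """Total cost of buying, moving and registering the vehicle."""
        return (
            self.buy_now_price
            + self.transport
            + self.buyer_fees
            + self.registration_tax
        )

    @property
    def margin(self) -> float:
        """Resale price minus landed cost; negative means a loss."""
        return self.expected_resale - self.landed_cost


def worth_surfacing(lot: Lot, min_margin: float = 0.0) -> bool:
    """Surface a lot only if its margin exceeds the chosen minimum."""
    return lot.margin > min_margin


lot = Lot(10_000, 600, 400, 500, 13_000)  # made-up figures
print(lot.landed_cost, lot.margin, worth_surfacing(lot))
# prints: 11500 1500 True
```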

2026 · 03 / 04 · AI solutions · Websites, web apps & online shops · Custom software

ClarixAI reads open-ended student answers (Dutch, English, Hungarian) and surfaces the misconception patterns dominating a class (concept confusion, surface-pattern matching, missing precondition, and six others), with a concrete pedagogical action card per pattern. It does not grade students; it grades the class's reasoning. A per-language multi-task transformer ensemble plus HDBSCAN clustering finds structural patterns across questions.

What we shipped

- Multi-task misconception classifier · NL · EN · HU

- 8 reasoning-error meta-tags · with a teacher action per tag

- HDBSCAN clustering for cross-question structural patterns

What we used: Python · PyTorch · Transformers · FastAPI · React

2026 · 04 / 04 · AI solutions · Websites, web apps & online shops · Mobile apps

Pulls data from smart watches, fitness bands, and other sources (heart rate, sleep, steps, oxygen), correlates it with AI, and recommends treatment, medication, or lifestyle changes. Web and mobile UI, with an API.

What we shipped

- Wearable data ingestion

- AI analysis and trend watching

- Treatment and lifestyle suggestions

What we used: Express · React-Redux · SQLite · OpenRouter

AI solutions, city by city

Budapest-based studio · we deliver to the cities and regions below, remote-first with on-site on request.

Let's get started.

Send an email or book a 30-minute call.
